Posts about TanStack AI.

Try the experimental TanStack AI orchestration PR build: generator-based workflows, typed agent calls, approvals, SSE streaming, AG-UI events, and React hooks.

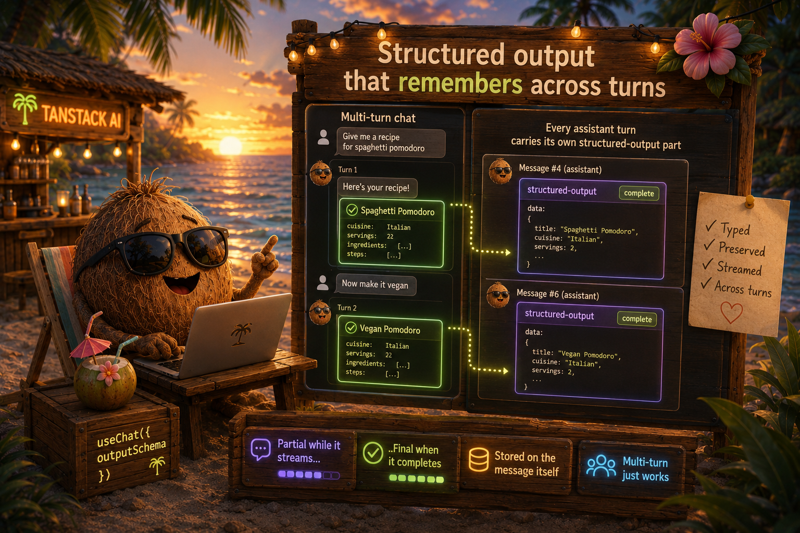

useChat({ outputSchema }) used to keep a single slot for the partial and final output, so multi-turn structured chats lost prior turns the moment a new one streamed in. Every assistant turn now carries its own typed StructuredOutputPart on its UIMessage. History is preserved by default, and the schema generic threads all the way down to messages[i].parts[j].data.
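A rough sketch of the per-message shape described above. The types here (`TextPart`, `StructuredOutputPart<T>`, `UIMessage<T>`) and the `finalOutputs` helper are simplified, hypothetical stand-ins modeled on the wording of the post, not TanStack AI's actual definitions.

```typescript
// Hypothetical, simplified types for illustration only.
type TextPart = { type: "text"; text: string };

// A partial part may be missing fields; a final part carries the full schema type.
type StructuredOutputPart<T> =
  | { type: "structured-output"; status: "partial"; data: Partial<T> }
  | { type: "structured-output"; status: "final"; data: T };

type UIMessage<T> = {
  id: string;
  role: "user" | "assistant";
  parts: Array<TextPart | StructuredOutputPart<T>>;
};

// Collect every completed structured output across the whole conversation.
// Because each turn owns its part, earlier turns are still there to read.
function finalOutputs<T>(messages: UIMessage<T>[]): T[] {
  const results: T[] = [];
  for (const message of messages) {
    for (const part of message.parts) {
      if (part.type === "structured-output" && part.status === "final") {
        results.push(part.data); // typed as T, no casting needed
      }
    }
  }
  return results;
}

// Example schema type (assumed for the sketch).
type Recipe = { title: string; minutes: number };

const messages: UIMessage<Recipe>[] = [
  { id: "1", role: "user", parts: [{ type: "text", text: "A quick pasta?" }] },
  {
    id: "2",
    role: "assistant",
    parts: [{ type: "structured-output", status: "final", data: { title: "Aglio e olio", minutes: 15 } }],
  },
  { id: "3", role: "user", parts: [{ type: "text", text: "And a salad?" }] },
  {
    id: "4",
    role: "assistant",
    parts: [{ type: "structured-output", status: "partial", data: { title: "Greek sal" } }],
  },
];

const history = finalOutputs(messages);
```

In this sketch the first assistant turn stays readable while the second is still streaming, which is the behavior the post describes.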

Server-to-client AG-UI events already worked. TanStack AI now completes the round trip with client-to-server AG-UI compliance. Fully backward compatible.

Pass a Zod schema to useChat and get a typed `partial` and `final` for free. No more parsePartialJSON glue, no more onChunk wiring. TanStack AI now streams structured output end-to-end across OpenAI, OpenRouter, Grok, Groq, and Ollama.

TanStack AI adds a new generateAudio activity with streaming, plus fal and Gemini Lyria adapters for music, sound effects, text-to-speech, and transcription. One typed API, any provider.

Your AI pipeline is a black box: a missing chunk, a middleware that doesn't fire, a tool call with mystery args. TanStack AI now ships pluggable, category-toggleable debug logging across every activity and adapter. Flip one flag and the pipeline prints itself.

Provider tools like web search and code execution are supported on some models and silently ignored on others. TanStack AI now gates them per model at the type level, so incompatible pairings fail at compile time instead of in production.
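One way such type-level gating can be expressed in TypeScript is a capability map keyed by model name. The sketch below is only an illustration of the technique: the model names, tool names, `ChatOptions`, and `describeRequest` are all made up and are not TanStack AI's API.

```typescript
// Hypothetical capability map: which provider tools each model accepts.
type ModelTools = {
  "model-with-search": "web_search" | "code_execution";
  "model-basic": never; // supports no provider tools
};

type ModelName = keyof ModelTools;

// The allowed tools depend on the model chosen in the same options object.
type ChatOptions<M extends ModelName> = {
  model: M;
  providerTools?: Array<ModelTools[M]>;
};

// Stand-in for a real request function; it only formats what it was given.
function describeRequest<M extends ModelName>(options: ChatOptions<M>): string {
  const tools = options.providerTools ?? [];
  const listed = tools.length > 0 ? tools.join(", ") : "no provider tools";
  return `${options.model}: ${listed}`;
}

// Compiles: this model lists "web_search" in the capability map.
const ok = describeRequest({ model: "model-with-search", providerTools: ["web_search"] });

// @ts-expect-error "model-basic" maps to never, so any provider tool is rejected by the compiler.
describeRequest({ model: "model-basic", providerTools: ["web_search"] });
```

The incompatible pairing is caught by the type checker, so it never reaches a provider that would silently ignore the tool.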

TanStack AI runs 147 deterministic E2E tests across 7 LLM providers in under 2 minutes. Here's the testing infrastructure that makes it possible.

One tool call at a time is the bottleneck. TanStack AI Code Mode lets the LLM write and execute TypeScript programs in secure sandboxes, composing your tools with loops, conditionals, and Promise.all in a single shot.
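To make the idea concrete, here is the kind of small program a model could write in a single shot instead of issuing one tool call per city. It is a sketch under assumptions: `getTemperature` is a hypothetical tool stubbed locally with fake data, and nothing here shows how Code Mode sandboxes or exposes tools.

```typescript
// Hypothetical tool, stubbed with fake data so the sketch runs on its own.
async function getTemperature(city: string): Promise<number> {
  const fake: Record<string, number> = { Lisbon: 22, Oslo: 9, Cairo: 31 };
  return fake[city] ?? 0;
}

type Reading = { city: string; temperature: number };

// Fan out all tool calls at once, then reduce the results with ordinary control flow.
async function warmestCity(cities: string[]): Promise<Reading | undefined> {
  const readings: Reading[] = await Promise.all(
    cities.map(async (city) => ({ city, temperature: await getTemperature(city) })),
  );

  let warmest: Reading | undefined;
  for (const reading of readings) {
    if (warmest === undefined || reading.temperature > warmest.temperature) {
      warmest = reading;
    }
  }
  return warmest;
}

const warmestPromise = warmestCity(["Lisbon", "Oslo", "Cairo"]);
```

With sequential tool calling, the same task would take one model round trip per city plus another to compare the results.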

Every tool definition costs tokens and eats into the context window. Past a certain point it actually makes the model worse. Today we're shipping lazy tool discovery in TanStack AI.

Your chat endpoint starts simple, then you need logging, filtering, caching, rate limiting, and suddenly it's a 200-line monster. TanStack AI now ships a first-class middleware system.

Text-based chat is table stakes. TanStack AI now ships first-class support for realtime voice conversations — real voice, real time, with the AI hearing you, thinking, and responding naturally.

Chat is just the beginning. Your AI app needs image generation, text-to-speech, transcription, and more. TanStack AI now ships generation hooks — a unified set of React hooks for every non-chat AI activity.

Instead of one monolithic adapter that does everything, we split it into smaller adapters, each in charge of a single piece of functionality. Here's why that architectural change matters.

We spent eight days building an API we had to kill. One function to rule them all, one function to control all adapters, one function to make it all typesafe — here's what happened.

It's been two weeks since the first alpha, and we've prototyped our way through five or six internal architectures. Alpha 2 brings every modality, better APIs, and smaller bundles.

The current AI landscape has a problem — pick a framework, pick a cloud provider, and suddenly you're locked in. TanStack AI is a framework-agnostic toolkit built for developers who want control.